Conferences, events and translations are the real three musketeers – they need each other. Often, event participants and speakers use different languages, so the primary task of the organiser is to ensure that everyone understands the content. Until now, the matter was quite simple and the technology was well known. Since the speaker and the simultaneous interpreter were talking at the same time, without interruption, the audience had to be provided with comfortable conditions to receive the message via personal headsets. As a result, they could follow the interpreting, focusing only on the sound sent to the headsets. It also meant that the speaker was not disturbed by the voice of the interpreter. Therefore, simultaneous interpreting sessions require the use of equipment that supports parallel communication between the speakers, interpreters and listeners. During a typical session, each listener receives a radio receiver along with a headset for listening to the translated audio. Interpreters, on the other hand, use equipment that allows them to simultaneously listen to the speaker's voice and translate their words to the audience through a microphone. There are several proven technologies available on the market that have been in use for years. They all function in a similar way and we are already very familiar with their use.

In the era of the coronavirus pandemic, however, the world of events has been turned upside down. Virtual conferences have become as natural for us all as traditional offline ones. So how did the online world deal with the challenge of ensuring the same translation conditions during virtual events?

Simultaneous interpreting at online conferences

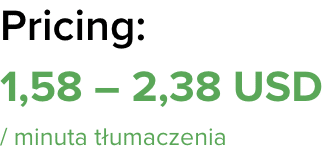

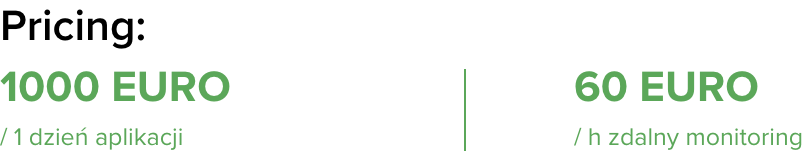

During the pandemic, several companies developed technologies to allow simultaneous interpreting for online conferences. In most cases, these applications are based on WebRTC, A/V-over-IP or streaming internet protocols, which support audio/video communication between devices over the internet, Wi-Fi and cellular networks. Currently, these applications can be deployed using mobile devices. They do not require any special equipment for simultaneous interpretation: interpreters can operate them from standard laptops, and listeners can use their personal smartphones as receivers (so-called BYOD, "bring your own device", technologies). Moreover, with these systems, simultaneous interpreters can either work on-site or be connected remotely, whereas in the traditional model they have to be present at the event. A prerequisite for success in this regard is a stable internet connection.

Will computers replace human translators?

Automatic speech recognition technology is constantly evolving and the performance of dictation software is now impressive. In the field of automatic translation, spectacular progress has also been made. The combination of these technologies will potentially enable quasi-simultaneous speech translations, but the quality of its results will remain well below that of human simultaneous interpretation due to errors in speech recognition, semantic interpretation and voice synthesis. With the Polish language, we will definitely have to wait until the speech recognition and translation functions are at a satisfactory level. Over time, such options may be used for specialised purposes and circumstances, but they are less likely to be used at events where a natural style of communication is important. No matter how advanced the technological development is, simultaneous interpreters will always be needed in certain market segments, in particular in political speeches, business conferences and media debates. This is confirmed by the fact that the devices used to provide interpretation have traditionally relied on infrared or bi-directional radio technologies and have remained almost unchanged in recent decades, except for miniaturisation and the evolution from analogue to digital audio.

However, internet-based solutions, using smartphones or laptops, have recently been introduced to the market. They are not yet widely known, but the pandemic has significantly accelerated their implementation. In specific circumstances, such as fully remote conferencing, they can be easier to use because they need less equipment and therefore cost less than traditional systems.

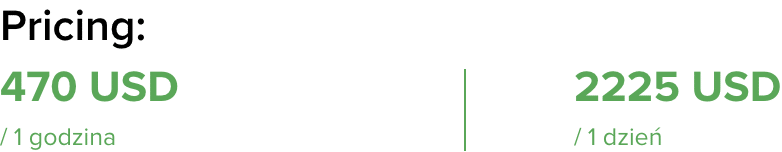

A special case is hybrid conferences that require a combination of two technologies: the use of standard equipment, as in the case of ordinary conferences, and also the implementation of online tools, thanks to which remote speakers and participants will have the opportunity to hear the translation. This combination of two types of technology generates the need to have much more equipment and human resources than you might think.

For a better picture, let's look at two situations: a fully online conference and a hybrid one.

For an online event, using a translation platform or application, hardly any equipment is needed. The remote interpreters use their own laptops, microphones and loudspeakers. Plus, speakers and participants have the option to choose the desired language. Of course, even with a fully online event, you can go a step further and use certain tools for mixing video and audio. That gives us the possibility to have a more interesting visual presentation of the content, and having the interpreters on-site also means that we remove the risk of losing the connection, having poor audio quality or experiencing long delays.


For a hybrid conference, we have to provide interpreters, booths, equipment for the people physically present at the event and the technology that allows us to combine the traditional translation system with platforms or applications for speakers and remote participants. At this point, we are often surprised at how much additional equipment we need and, above all, at how important the person who watches over the proper operation of the entire system is. They have to control the entire process and all the audio tracks, of which there are many. Even in a seemingly simple situation, when we translate into English and Polish, we have several input tracks: the original language of the on-site speakers, the online presenters, and the English and Polish interpreters. The job of the sound engineer is to mix the audio correctly to get the output tracks right:

- a track with an audio mix of remote and local speakers for the interpreters

- a streamed audio track with the English translation

- a streamed audio track with the translation into Polish

- an audio track with an untranslated mix for local participants

- two audio tracks with translation into Polish and English – for the listening system and local participants

- an audio track with the English translation for remote speakers

- an audio track with the Polish translation for remote speakers

The number of overlapping tracks and the multitude of combinations require the use of advanced mixers, with the option of extensive routing of audio tracks and additional interfaces. Above all, they also require the sound engineer to have great skills, technical expertise and considerable experience.
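To make the routing problem more tangible, the input and output tracks listed above can be written down as a small routing matrix. This is only a minimal sketch: all track names are hypothetical labels chosen for illustration, and the assumption that the local untranslated mix contains both on-site and remote speakers is ours, not a description of any particular mixer.

```python
# Hypothetical labels for the four input tracks described in the text.
INPUTS = ("onsite_speakers", "remote_speakers", "interp_en", "interp_pl")

# Each output track maps to the set of inputs mixed into it (assumed layout).
ROUTING = {
    "interpreter_feed": {"onsite_speakers", "remote_speakers"},
    "stream_en": {"interp_en"},
    "stream_pl": {"interp_pl"},
    "local_floor": {"onsite_speakers", "remote_speakers"},
    "local_headsets_en": {"interp_en"},
    "local_headsets_pl": {"interp_pl"},
    "remote_return_en": {"interp_en"},
    "remote_return_pl": {"interp_pl"},
}


def validate(routing, inputs):
    """Check that every output has a source and uses only known inputs."""
    known = set(inputs)
    for output, sources in routing.items():
        if not sources:
            raise ValueError(f"{output} has no source")
        unknown = set(sources) - known
        if unknown:
            raise ValueError(f"{output} uses unknown inputs: {sorted(unknown)}")


def outputs_fed_by(routing, source):
    """Return the sorted list of outputs that contain the given input."""
    return sorted(out for out, sources in routing.items() if source in sources)


def print_matrix(routing, inputs):
    """Print a simple text grid: rows are outputs, columns are inputs."""
    width = max(len(name) for name in routing)
    print(" " * width, *inputs)
    for output, sources in routing.items():
        cells = [("x" if name in sources else ".").center(len(name)) for name in inputs]
        print(output.ljust(width), *cells)


validate(ROUTING, INPUTS)
print_matrix(ROUTING, INPUTS)
```

Even in this two-language case, four inputs fan out into eight outputs, and each additional language adds one more input and several more outputs.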

Experience and research have confirmed that even with the best image quality and refined content, the event will not be a success if the sound quality is poor. Many people have undoubtedly experienced unpleasant reverb or sound distortion that made it impossible to understand the content. When information is being broadcast, the audio is more important than the images we see. It is vital to take care of the sound quality, and it definitely should not be neglected. What’s more, it’s also much more challenging than producing the visual side.

Subjective overview of some simultaneous online translation tools

ABLIO

Ablioconference is a web-based software solution which interpreters and event organisers run on standard computers while the audience listens to the audio translations on their own smartphones. Unlike other similar solutions, Ablioconference doesn't necessarily rely on existing Wi-Fi networks, as they can provide unpredictable or inadequate levels of performance. Instead, it allows you to create your own dedicated network. Thanks to the patented Ablio system, this network can simultaneously and effectively support hundreds of users via just one router. It can run as a cloud application or be deployed locally without the need to use the existing internet connectivity. Moreover, it can be operated in conjunction with any conferencing platform to provide the same simultaneous interpretation services for web sessions and conferences. Event organisers can configure, test and manage the service flow through online dashboards. They can also use their own preferred interpreters or choose and engage them from a large community.

KUDO

This is not only a remote translation tool, but also an event platform. The software doesn’t need any additional external tools like Zoom or Teams. Kudo, like any video chat application, allows you to share the screen and transfer documents and messages between meeting participants, and it provides separate interfaces for participants and interpreters. The most important features of the interpreter interface are:

- configuration of the output channel (language)

- selection of the output language

- setting the volume of the input and output language

- possibility of muting

- ability to switch between several video streams

- handover function – enabling smooth changes between interpreters

INTERACTIO

This is a simultaneous translation app that allows you to connect a speaker, interpreter and viewers remotely. Everyone can be at home and communicate as if they were in the same room. The advantage of this solution is its simplicity. How does it work? The app is downloaded by speakers, interpreters and conference participants alike. Everyone has a role assigned to them in the application. The signal goes from the speakers to the interpreters, who hear and see the speaker and translate their words in real time, and the translation is immediately passed on to the conference participants. The transmission delay is only 1-2 seconds. Thanks to apps like Interactio, there is no need to rent booths and other equipment, which also saves on the interpreters' travel and accommodation costs. All you need is a laptop, a microphone and a stable internet connection (3G/4G/LTE). The conference organisers should secure the appropriate internet speed in the place where the speaker is: about 120 kb/s per recipient and 1 Mb/s for the broadcasting computer. The Interactio system can handle up to 50,000 participants.
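The bandwidth figures quoted for Interactio can be turned into a rough planning estimate. The sketch below assumes that the two figures simply add up, that all recipients counted share the same venue connection, and that 1 Mb/s equals 1,000 kb/s; the function name and constants are hypothetical and not part of any Interactio API.

```python
# Figures quoted in the text; how they combine is an assumption of this sketch.
PER_RECIPIENT_KBPS = 120   # about 120 kb/s per recipient
BROADCASTER_KBPS = 1000    # 1 Mb/s for the broadcasting computer


def required_kbps(recipients: int) -> int:
    """Estimate the total bandwidth (kb/s) for one broadcaster plus recipients."""
    if recipients < 0:
        raise ValueError("recipients must not be negative")
    return BROADCASTER_KBPS + recipients * PER_RECIPIENT_KBPS


if __name__ == "__main__":
    for count in (50, 200, 500):
        total = required_kbps(count)
        print(f"{count} recipients: about {total / 1000:.1f} Mb/s")
```

Under these assumptions, 200 recipients on the venue network would call for roughly 25 Mb/s, so it is worth leaving some headroom above the estimate when ordering the connection.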

Zoom

The web platform for meetings and conferences now also offers an interpretation function. It is available as part of the Pro plan with the option of adding webinar videos. The conference host can enable this feature when they need to create an additional audio channel for the language which the interpreter is assigned to. The meeting participants can then select the channel (language) they want to listen to. The only thing that is missing from Zoom, which sets it apart from the dedicated remote simultaneous interpreting (RSI) platforms, is the interpreter handover feature. The interpreters cannot hear each other, which really complicates the handover process. This means that the interpreters have to connect with each other via an external channel (e.g. Messenger or Skype) and keep the call open throughout the conference so they can hear each other and ensure a smooth handover. Another option is to connect to the Zoom conference from another device as a participant and listen to the interpreting channel. On its website, Zoom reports that the interpretation feature is still being tested, which means errors are to be expected. Zoom also has a mobile app that you can use for interpretation purposes.

Ever since the coronavirus caused conferences to be moved to the virtual space, everything has become more complicated, including proven translation methods that have been practised for years. The realities of the pandemic forced technology to adapt to the requirements of online events and, above all, the most complex hybrid conferences combining the traditional and virtual space. When using the array of tools that facilitate the efficient running of online events, it is important to remember that concern for the visual side of the event should not obscure the key issue that ensures it is well received, namely, the sound quality. The provision of comfortable simultaneous interpreting should be number one on your event priority list.